Open-Source AI Orchestration Tools: Complete Guide

Table of Contents

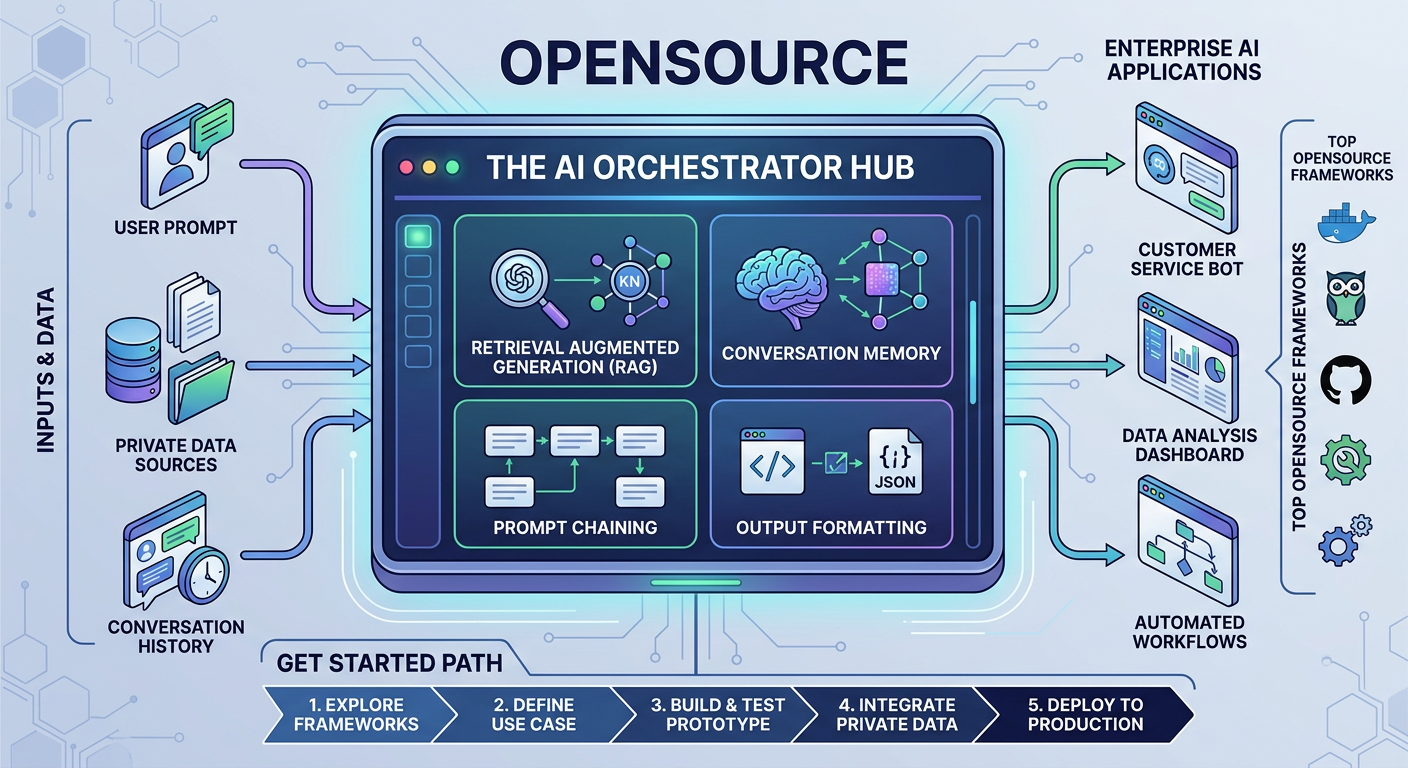

The phase of plugging a single AI API into your product doesn't last long. As usage grows, costs spike, latency becomes unpredictable, and security teams start asking hard questions about where sensitive data is flowing. That's where open-source AI orchestration changes the equation. Instead of wiring your application directly to external models, an orchestration layer introduces structure: it routes requests intelligently, grounds responses in private data, manages memory, enforces guardrails, and lets you swap models without rewriting your entire stack. This guide explores the leading open-source AI orchestration tools, from LangChain and LlamaIndex to Haystack and Semantic Kernel, and explains how to choose the right framework based on your architecture and goals. Whether you're pursuing self-hosted AI orchestration for stronger data control or looking to reduce API spend through smarter routing, adopting open-source AI orchestration infrastructure gives your team flexibility, cost control, and long-term ownership of your AI roadmap.

Think back to the early days of the generative AI boom. Adding AI to a product felt almost like magic, and the process was deceptively simple. Your engineering team would grab an API key from a major provider like OpenAI or Anthropic, write a few quick lines of code to pass a user's prompt to their servers, and wait for the generated text to flow back into your application. It was an incredible party trick that looked great in a product demo. But as these simple prototypes grew into real, production-ready enterprise tools, a harsh reality set in. Real B2B applications need more than a clever chatbot. They need to ground the AI's answers in your company's private data, a process known as retrieval-augmented generation (RAG). They need to remember past conversations, chain multiple complex prompts together, and format the AI's output reliably so your software can parse it. Suddenly, that clean, simple codebase turned into a tangled mess of custom scripts and fragile integrations that broke every time a user asked an unexpected question. This is the moment when the need for open-source AI orchestration becomes obvious. By putting a standardized management layer between your software and the AI models, your team can take back control. Many software companies are moving toward these open-source solutions to escape the high costs and strict limitations of proprietary vendors. Instead of renting someone else's infrastructure, they are building custom, flexible systems that can grow with their customer base. What's more, looking into free AI orchestration is not just about pinching pennies; it is about protecting your intellectual property and keeping your clients' sensitive data safe on your own servers. In this guide, we walk through the story of a SaaS company upgrading its architecture, look at the leading frameworks available today, and map out how you can implement these tools in your own business.

The Breaking Point: Why Simple API Calls Fail in B2B

To really grasp why these frameworks are lifesavers, let's look at the story of a fictional, but very realistic, B2B SaaS company called OmniMetrics. OmniMetrics builds analytics software for human resources departments. A year ago, its leadership decided it needed an AI assistant to help users search through complex employee datasets. Their engineers took the easy route first, wiring the product directly to a large external language model's API. At first, the launch was a huge success. But as thousands of users started asking the AI to summarize long quarterly reports, things started to crack. The monthly bill from their AI provider skyrocketed because they were paying for every token sent and generated. When the external AI provider had a server outage, the OmniMetrics application went down with it, angering customers. The biggest blow came during a sales call with a large enterprise prospect. The prospect's security officer asked a simple question: "When our HR managers ask your AI about employee salaries, does that data leave your servers?" When OmniMetrics admitted the data was sent to a third-party AI vendor, the deal died instantly. That was their turning point. OmniMetrics realized that AI features could not be an external add-on; they had to be built into the core of their own software. They needed a shift toward self-hosted AI orchestration. By bringing the "brain" of the operation inside their own walls, they could route highly sensitive HR questions to small, private AI models running securely on their own servers, and reserve the expensive external models for questions that did not involve private data. The market is full of open-source AI orchestration tools that make this kind of smart traffic routing possible. For OmniMetrics, this wasn't just a tech upgrade; it was the only way to protect their profit margins, pass strict security audits, and keep growing their business.
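The routing rule OmniMetrics adopted can be sketched in a few lines of plain Python. This is a minimal illustration, not any framework's API. The `route_question` function, the `Route` type, and the keyword patterns are all hypothetical. A keyword list is also a deliberately crude stand-in for the data-classification step a real system would use.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns that flag a question as touching private HR data.
SENSITIVE_PATTERNS = [
    r"\bsalar(?:y|ies)\b",
    r"\bcompensation\b",
    r"\bsocial security\b",
    r"\bperformance reviews?\b",
]


@dataclass
class Route:
    target: str  # "private" (self-hosted model) or "external" (third-party API)
    reason: str


def route_question(question: str) -> Route:
    """Send anything that looks sensitive to the self-hosted model."""
    text = question.lower()
    for pattern in SENSITIVE_PATTERNS:
        if re.search(pattern, text):
            return Route("private", f"matched {pattern}")
    return Route("external", "no sensitive terms found")


print(route_question("What is the average salary in engineering?").target)  # private
print(route_question("Summarize Q3 headcount trends").target)  # external
```

Keyword matching fails open: a sensitive question phrased in unexpected words would still be sent to the external model. A stricter design would invert the default. It would send everything to the private model unless a question is positively classified as safe.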

What Does the Orchestration Layer Actually Do?

Before we dive into the specific tools, let's talk in plain English about what an orchestration layer actually does inside a B2B SaaS application. Think of an AI model as a brilliant but isolated chef in a kitchen. The chef can cook amazing meals but doesn't know what the customer ordered, where the ingredients are kept, or how to plate the food for the dining room. An orchestration layer is the head chef, or the expediter. It sits between your application, your databases, and the AI model itself. [4] When a user types a question into your software, that question rarely goes straight to the AI anymore. Instead, the orchestration layer intercepts it. Acting like a smart librarian, it takes the user's question and searches your company's private databases for the right background information. It gathers those "ingredients" and hands them to the AI model along with clear instructions on how to answer. But the job doesn't stop there. When the AI hands an answer back, the orchestration layer checks it. For example, it can verify that the answer is grounded in the sources it retrieved, which helps catch hallucinations before they reach the user. It formats the text into a clean data structure so your software can display it in a chart or a table. More advanced open-source AI orchestration frameworks also manage the AI's memory, so it remembers what the user said five minutes ago. By using these tools, your engineers can build a clean, organized kitchen. And the best part? If a new, cheaper AI model comes out next month, you don't have to rebuild your whole application. You just swap out the "chef" while the rest of the kitchen keeps running smoothly.
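To make these stages concrete, here is a minimal sketch of an orchestration layer in plain Python. It is not modeled on any particular framework. `Orchestrator`, `ChatModel`, and `FakeModel` are hypothetical names, and keyword overlap stands in for real vector search. The guardrail only checks that the model returned valid JSON citing at least one retrieved passage. That is a weak grounding check, not full hallucination detection.

```python
import json
from typing import List, Protocol


class ChatModel(Protocol):
    """Anything with a complete() method can act as the "chef"."""

    def complete(self, prompt: str) -> str: ...


class Orchestrator:
    """Hypothetical orchestration layer: retrieve, prompt, call, validate, remember."""

    def __init__(self, model: ChatModel, documents: List[str], max_turns: int = 5):
        self.model = model
        self.documents = documents
        self.history: List[str] = []
        self.max_turns = max_turns

    def retrieve(self, question: str, k: int = 2) -> List[str]:
        # Rank documents by shared words; a stand-in for vector search.
        words = set(question.lower().split())
        ranked = sorted(
            self.documents,
            key=lambda doc: len(words & set(doc.lower().split())),
            reverse=True,
        )
        return ranked[:k]

    def build_prompt(self, question: str, context: List[str]) -> str:
        sources = "\n".join(f"[{i}] {text}" for i, text in enumerate(context))
        return (
            "Answer using ONLY the numbered sources. "
            'Reply as JSON: {"answer": "...", "sources": [0]}\n'
            f"{sources}\nConversation so far:\n" + "\n".join(self.history)
            + f"\nQuestion: {question}"
        )

    def validate(self, raw: str, context: List[str]) -> dict:
        # Guardrail: valid JSON that cites at least one retrieved source.
        data = json.loads(raw)
        cited = data.get("sources", [])
        if not cited or any(not 0 <= i < len(context) for i in cited):
            raise ValueError("answer does not cite the retrieved sources")
        return data

    def ask(self, question: str) -> dict:
        context = self.retrieve(question)
        raw = self.model.complete(self.build_prompt(question, context))
        result = self.validate(raw, context)
        # Memory: keep only the last few question/answer turns.
        self.history += [f"user: {question}", f"assistant: {result['answer']}"]
        self.history = self.history[-2 * self.max_turns:]
        return result


class FakeModel:
    """Stub model so the example runs without any API key."""

    def complete(self, prompt: str) -> str:
        return json.dumps({"answer": "Remote work is allowed 2 days a week.", "sources": [0]})


bot = Orchestrator(FakeModel(), ["Remote work policy: 2 days a week.", "Expense limit is $50."])
print(bot.ask("What is the remote work policy?")["answer"])
```

The orchestrator depends only on the `complete` method. Swapping the "chef" therefore means passing in a different model object, and nothing else changes.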

LangChain: The Swiss Army Knife for Complex Tasks

When developers talk about this shift in how we build AI, LangChain is almost always the first name that comes up. Born out of the developer community, LangChain quickly became one of the most widely adopted frameworks for teams that want to build applications that do more than just talk. Its creators recognized early that large language models are much more useful when they act as reasoning engines: a brain that can use tools. [2] In B2B software, you don't just want an AI that can write a poem; you want an AI that can read a customer's email, check their account status in Salesforce, update a ticket in Jira, and then draft a response. LangChain makes this possible with two main concepts: chains and agents. A chain is exactly what it sounds like: a predictable, step-by-step process. This is ideal for strict corporate environments where a process, like generating an end-of-month financial report, needs to happen the same way every time. Agents are more flexible. You give an agent a goal and a toolbox, and it uses the model's reasoning to decide which steps to take to get the job done. For a SaaS company, bringing LangChain into your software means you can build workflows that mirror the steps a human employee would take. Because it is so popular, choosing this framework gives your team access to a large body of tutorials and community support, cementing its place as a heavyweight in open-source AI orchestration. [1]
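The difference between a chain and an agent comes down to who decides the next step. The framework-agnostic sketch below is not LangChain's API. `run_chain`, `run_agent`, and the `choose` callback are hypothetical. A scripted function plays the part of the model that, in a real agent, would pick each tool.

```python
from typing import Callable, Dict, List

Tool = Callable[[str], str]


def run_chain(steps: List[Callable[[str], str]], text: str) -> str:
    """Chain: the same steps run in the same order every time."""
    for step in steps:
        text = step(text)
    return text


def run_agent(
    goal: str,
    tools: Dict[str, Tool],
    choose: Callable[[str, List[str]], str],
    max_steps: int = 5,
) -> List[str]:
    """Agent: `choose` (standing in for the LLM) picks the next tool or "finish"."""
    transcript: List[str] = []
    for _ in range(max_steps):  # hard cap so a confused agent cannot loop forever
        action = choose(goal, transcript)
        if action == "finish":
            break
        observation = tools[action](goal)
        transcript.append(f"{action}: {observation}")
    return transcript


# Hypothetical tools; real ones would call Salesforce, Jira, and so on.
tools: Dict[str, Tool] = {
    "check_account": lambda goal: "account=active",
    "update_ticket": lambda goal: "ticket=updated",
}


def scripted_choose(goal: str, transcript: List[str]) -> str:
    # A real agent would ask the model; here we call each tool once, then stop.
    order = ["check_account", "update_ticket"]
    return order[len(transcript)] if len(transcript) < len(order) else "finish"


print(run_chain([str.strip, str.upper], "  q3 report "))  # Q3 REPORT
print(run_agent("Handle refund email", tools, scripted_choose))
# ['check_account: account=active', 'update_ticket: ticket=updated']
```

The `max_steps` cap matters in practice. Without it, an agent whose model never answers "finish" would loop forever.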

LlamaIndex: The Ultimate Librarian for Your Company Data

While LangChain excels at taking action and using tools, LlamaIndex tells a slightly different story. For most B2B SaaS companies, the biggest selling point of an AI feature isn't getting it to use external tools; it is getting it to talk intelligently about the company's large piles of messy internal data. The technique that addresses this is retrieval-augmented generation (RAG), and LlamaIndex was built from the ground up around exactly this job. Think about how complicated a company's data is. It lives in PDF contracts, messy Slack conversations, structured customer databases, and scattered Google Docs. LlamaIndex acts as a corporate librarian. It offers a large library of prebuilt connectors that ingest all those different file types and formats. Once the data is in, LlamaIndex splits it into chunks, indexes it, and stores it so that an AI model can search it quickly at query time. It puts heavy emphasis on retrieval quality. By feeding the AI exactly the right paragraphs from your company's handbooks or customer histories, LlamaIndex can substantially reduce the chance of the AI making a mistake or hallucinating a false answer. Among the many open-source AI orchestration tools available, LlamaIndex stands out because it lets developers build search systems that understand context. For a SaaS business whose value depends on managing client data safely and accurately, LlamaIndex provides a solid foundation for turning static files into a dynamic, conversational experience for the user.
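A toy index can illustrate the ingest, index, and retrieve cycle that LlamaIndex automates. This sketch is not LlamaIndex's API. `chunk`, `TinyIndex`, and their methods are hypothetical. The index scores chunks with simple TF-IDF keyword weighting, whereas production RAG systems typically use embedding vectors in a vector store.

```python
import math
import re
from collections import Counter, defaultdict
from typing import Dict, List, Tuple


def chunk(text: str, size: int = 40) -> List[str]:
    """Split a document into ~size-word chunks so retrieval returns focused passages."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def tokenize(text: str) -> List[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


class TinyIndex:
    """Toy TF-IDF index over chunks, remembering which source each chunk came from."""

    def __init__(self) -> None:
        self.chunks: List[Tuple[str, str]] = []  # (source, chunk text)
        self.postings: Dict[str, Dict[int, int]] = defaultdict(dict)  # term -> {chunk id: count}

    def add(self, source: str, text: str) -> None:
        for piece in chunk(text):
            cid = len(self.chunks)
            self.chunks.append((source, piece))
            for term, tf in Counter(tokenize(piece)).items():
                self.postings[term][cid] = tf

    def search(self, query: str, k: int = 3) -> List[Tuple[str, str]]:
        n = len(self.chunks)
        scores: Dict[int, float] = defaultdict(float)
        for term in set(tokenize(query)):
            hits = self.postings.get(term, {})
            if not hits:
                continue
            idf = math.log(1 + n / len(hits))  # smoothed IDF: rarer terms weigh more
            for cid, tf in hits.items():
                scores[cid] += tf * idf
        best = sorted(scores, key=scores.get, reverse=True)[:k]
        return [self.chunks[cid] for cid in best]


index = TinyIndex()
index.add("handbook.pdf", "Employees accrue 20 vacation days per year.")
index.add("slack-export", "Reminder: expense reports are due Friday.")
print(index.search("how many vacation days", k=1))
# [('handbook.pdf', 'Employees accrue 20 vacation days per year.')]
```

Each retrieved chunk keeps its source name. The final answer can therefore cite where each fact came from, which supports the grounding check an orchestration layer performs.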

Haystack by deepset: The Precision Builder for Search

The story behind Haystack, a framework built by the team at deepset, is one for serious engineers who care deeply about reliability. Some frameworks try to do everything, chasing every new AI trend that pops up on Twitter. Haystack, on the other hand, has stayed focused on its roots: natural language processing and accurate search. This focus makes it a strong choice for B2B SaaS companies where transparency, speed, and stability matter more than flashy, unpredictable AI magic. Haystack treats building an AI pipeline like snapping together high-quality Lego blocks. Every piece of your system (the part that stores documents, the part that searches for them, and the part that generates the final answer) is a separate, interchangeable component. This kind of setup is a dream for senior software architects, because your engineering team can test and tune every step of the process independently. If one part of your search isn't returning good results, you can pop that block out and replace it without breaking the rest of your application. Haystack has also long championed running smaller, specialized AI models on your own hardware, making it a natural fit for a self-hosted AI orchestration strategy. By keeping things clear, modular, and transparent, Haystack lets SaaS companies build AI features they can trust, proving that you can take advantage of free AI orchestration without sacrificing the stability your enterprise customers demand. [3]
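Haystack's "Lego block" idea is that every stage shares a common interface, so one block can be replaced without touching the others. The plain-Python sketch below mirrors that idea only. Haystack's real pipeline API, which connects components into a graph, works differently. `Pipeline`, `KeywordRetriever`, `PhraseRetriever`, and `FirstDocGenerator` are hypothetical names.

```python
from typing import Any, Dict, List, Protocol

Data = Dict[str, Any]


class Component(Protocol):
    def run(self, data: Data) -> Data: ...


class KeywordRetriever:
    """Keeps documents sharing at least one word with the query."""

    def __init__(self, docs: List[str]) -> None:
        self.docs = docs

    def run(self, data: Data) -> Data:
        query = set(data["query"].lower().split())
        return {**data, "documents": [d for d in self.docs if query & set(d.lower().split())]}


class PhraseRetriever:
    """Drop-in replacement: keeps documents containing the exact query phrase."""

    def __init__(self, docs: List[str]) -> None:
        self.docs = docs

    def run(self, data: Data) -> Data:
        phrase = data["query"].lower()
        return {**data, "documents": [d for d in self.docs if phrase in d.lower()]}


class FirstDocGenerator:
    """Placeholder for an LLM call: answers with the top retrieved document."""

    def run(self, data: Data) -> Data:
        docs = data.get("documents", [])
        return {**data, "answer": docs[0] if docs else "No matching document."}


class Pipeline:
    """Runs named components in insertion order; any one can be swapped out."""

    def __init__(self) -> None:
        self.components: Dict[str, Component] = {}

    def add(self, name: str, component: Component) -> None:
        self.components[name] = component

    def replace(self, name: str, component: Component) -> None:
        if name not in self.components:
            raise KeyError(name)
        self.components[name] = component  # keeps the original position

    def run(self, data: Data) -> Data:
        for component in self.components.values():
            data = component.run(data)
        return data


docs = ["Invoices are archived for seven years.", "Archived chats are deleted after 90 days."]
pipe = Pipeline()
pipe.add("retriever", KeywordRetriever(docs))
pipe.add("generator", FirstDocGenerator())
print(pipe.run({"query": "archived chats"})["answer"])  # Invoices are archived for seven years.

pipe.replace("retriever", PhraseRetriever(docs))
print(pipe.run({"query": "archived chats"})["answer"])  # Archived chats are deleted after 90 days.
```

Only the retriever changed to fix the answer. The generator and the pipeline code stayed the same, which is what makes each block independently testable.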

Semantic Kernel: Microsoft's Bridge for Enterprise Teams

When a major player like Microsoft enters the open-source world, people pay attention, and its Semantic Kernel framework is a significant milestone for the industry. The story of Semantic Kernel is about building a sturdy bridge between the bleeding edge of AI research and the traditional, secure coding environments that large corporations have used for decades. Most large B2B SaaS platforms are not built on lightweight, experimental code; they rely on heavy-duty enterprise languages like C# and Java. Semantic Kernel was designed to work smoothly in those languages (it also offers a Python SDK), bringing modern AI capabilities to large, legacy software systems. Semantic Kernel is built around plugins and planning. Think of plugins as standard operating procedures: they let your developers wrap existing code into a package that the AI can understand and call. Planning is the brain of the operation. When a user asks a complex question, the model looks at the request and works out a step-by-step plan that uses those plugins to get the job done. Early versions shipped dedicated planner components for this; more recent releases steer developers toward the model's built-in function calling instead. This setup is especially attractive for large SaaS companies that already host their software on Microsoft Azure or have large teams of C# developers. It gives them a familiar, well-supported way to start using open-source AI orchestration frameworks. It also shows that you can bring powerful generative AI into a large, complex corporate codebase without tearing down the walls and starting over from scratch. [5]
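The plugin-and-plan pattern can be sketched in Python. This is not Semantic Kernel's API. `PluginRegistry`, its `plugin` decorator, and the keyword-matching `plan` method are hypothetical. The planner here is a deterministic stand-in for the model; in Semantic Kernel, the model would choose functions based on their descriptions.

```python
from typing import Callable, Dict, List


class PluginRegistry:
    """Hypothetical registry that exposes existing functions to a planner."""

    def __init__(self) -> None:
        self.functions: Dict[str, Callable[[str], str]] = {}
        self.descriptions: Dict[str, str] = {}

    def plugin(self, name: str, description: str):
        """Decorator: register a function with a description the planner can read."""
        def register(fn: Callable[[str], str]) -> Callable[[str], str]:
            self.functions[name] = fn
            self.descriptions[name] = description
            return fn
        return register

    def plan(self, request: str) -> List[str]:
        # Stand-in for the model: pick functions whose descriptions share a word
        # with the request, in registration order.
        words = set(request.lower().split())
        return [n for n, d in self.descriptions.items() if words & set(d.lower().split())]

    def execute(self, plan: List[str], payload: str) -> str:
        unknown = [n for n in plan if n not in self.functions]
        if unknown:  # reject the whole plan before running any step
            raise KeyError(f"plan references unknown functions: {unknown}")
        for name in plan:
            payload = self.functions[name](payload)
        return payload


registry = PluginRegistry()


@registry.plugin("lookup_invoice", "find invoice by customer id")
def lookup_invoice(customer_id: str) -> str:
    return f"INV-{customer_id}"  # imagine this wraps existing billing code


@registry.plugin("format_reply", "draft reply email text")
def format_reply(invoice: str) -> str:
    return f"Your latest invoice is {invoice}."


steps = registry.plan("find the invoice and draft a reply")
print(steps)  # ['lookup_invoice', 'format_reply']
print(registry.execute(steps, "C42"))  # Your latest invoice is INV-C42.
```

A real system should keep the step that validates the whole plan against the registry before calling anything, because a model can name a function that does not exist.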

How to Actually Implement This: A Step-by-Step Guide for SaaS Teams

Moving from reading about these frameworks to building them into your software requires a thoughtful, step-by-step approach. You can't just rip out your old code over a weekend. The journey toward better AI infrastructure starts with your technical leaders sitting down and defining exactly what problem you are trying to solve. Are you trying to build an AI that can carry out complex tasks and use external tools? If so, lean toward an agent-focused framework like LangChain. Or are you trying to help your users search through thousands of private documents? In that case, a data-focused framework like LlamaIndex is the right move. Once you have picked your tool, the next step is getting your internal servers ready for the job. This usually involves setting up a container orchestration platform such as Kubernetes to manage the secure databases and AI models you will be running yourself. Your engineering team will also need safe deployment pipelines, with automated tests that run whenever the AI's instructions (prompts) change, so that an update doesn't accidentally break the software for all your customers. Finally, you have to monitor everything. Because AI can be unpredictable, it is crucial to trace every step the AI takes. You need to know how long answers take to generate, how much each prompt costs, and whether the answers are actually accurate. By taking this slow, careful, engineering-first approach, a SaaS company can move away from messy, experimental code and build a stable, scalable foundation for the future.
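Tracing can start as a thin wrapper around every model call. This is a minimal sketch that assumes hypothetical per-1,000-token prices and uses whitespace word counts as a crude substitute for a real tokenizer. `Tracer` and `CallRecord` are illustrative names, not part of any monitoring product.

```python
import time
from dataclasses import dataclass, field
from functools import wraps
from typing import Callable, List

# Hypothetical prices in USD per 1,000 tokens; use your provider's real rates.
# The self-hosted model has no per-token fee; its hardware cost is tracked elsewhere.
PRICE_PER_1K = {"small-selfhosted": 0.0, "large-external": 0.01}


@dataclass
class CallRecord:
    model: str
    latency_s: float
    prompt_tokens: int
    completion_tokens: int

    @property
    def cost_usd(self) -> float:
        tokens = self.prompt_tokens + self.completion_tokens
        return tokens / 1000 * PRICE_PER_1K[self.model]


@dataclass
class Tracer:
    records: List[CallRecord] = field(default_factory=list)

    def trace(self, model: str):
        """Decorator that records latency and token counts for each model call."""
        def decorator(fn: Callable[[str], str]) -> Callable[[str], str]:
            @wraps(fn)
            def wrapper(prompt: str) -> str:
                start = time.perf_counter()
                output = fn(prompt)
                elapsed = time.perf_counter() - start
                # Word counts are a crude proxy; use the model's tokenizer in practice.
                self.records.append(
                    CallRecord(model, elapsed, len(prompt.split()), len(output.split()))
                )
                return output
            return wrapper
        return decorator

    def total_cost(self) -> float:
        return sum(r.cost_usd for r in self.records)


tracer = Tracer()


@tracer.trace("large-external")
def call_external(prompt: str) -> str:
    return "stub answer from external model"  # replace with a real client call


call_external("Summarize the quarterly attrition report for the board")
print(len(tracer.records), tracer.total_cost())
```

Accuracy is the hardest metric to automate. One common approach is to replay a fixed set of test questions after every prompt change and compare the answers against expected results.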

The Business Case: Saving Money and Winning Trust

At the end of the day, the decision to overhaul your technology isn't just about cool new code; it is a business decision driven by two major factors: protecting your profit margins and winning the trust of your enterprise customers. If you rely entirely on proprietary, black-box AI vendors, your software costs become dangerously unpredictable. Every time your users engage with your AI features, your company gets charged. If a feature becomes very popular, your API bills can explode, eating right into your profits. By integrating open-source AI orchestration tools, you can implement a strategy called semantic routing. This is a game-changer. Your system automatically examines each user's prompt and decides how hard it is. Simple questions are routed to small, efficient models that you host yourself, which carry no per-token fees (you still pay for the hardware they run on). Only the genuinely complex questions are sent to expensive, paid APIs. This financial strategy of using free AI orchestration goes hand in hand with data security. In the B2B world, keeping your clients' data safe is everything. Large enterprise customers often will not sign a contract if they know their private financial or employee data is being sent over the internet to a third-party AI company. Building your system around self-hosted AI orchestration addresses this directly. It lets your company keep control over the entire data lifecycle: sensitive information never leaves your secure databases, and the AI processes questions inside your own isolated servers. Having this architecture in place makes rigorous security audits much easier to pass, helps your sales team close bigger deals faster, and shows the market that your software is not just smart, but safe.
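Semantic routing usually works by comparing a prompt's embedding against example prompts for each route. The sketch below shows that structure under one big simplification: bag-of-words counts stand in for a real embedding model. The route names, example prompts, and the 0.3 threshold are hypothetical values you would tune on real traffic.

```python
import math
import re
from collections import Counter
from typing import Dict, List


def embed(text: str) -> Counter:
    # Bag-of-words counts stand in for a real embedding model.
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Hypothetical example prompts per route; curate these from real traffic.
ROUTES: Dict[str, List[str]] = {
    "small-selfhosted": [
        "format this date",
        "summarize this short paragraph",
        "fix the spelling in this sentence",
    ],
    "large-external": [
        "analyze why churn increased across regions and recommend a strategy",
        "compare these three contracts and explain the legal risks",
    ],
}


def semantic_route(prompt: str, threshold: float = 0.3, default: str = "large-external") -> str:
    """Pick the route whose closest example is most similar to the prompt."""
    query = embed(prompt)
    best_route, best_score = default, 0.0
    for route, examples in ROUTES.items():
        for example in examples:
            score = cosine(query, embed(example))
            if score > best_score:
                best_route, best_score = route, score
    # If nothing is similar enough, fall back to the more capable model.
    return best_route if best_score >= threshold else default


print(semantic_route("please format this date: 2024-01-05"))  # small-selfhosted
print(semantic_route("compare the vendor contracts and explain the risks"))  # large-external
print(semantic_route("hello"))  # large-external (nothing similar enough)
```

When no route is similar enough, the sketch falls back to the external model, trading cost for answer quality. A team optimizing purely for cost might choose the opposite default.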

Conclusion: Taking Back Control of Your AI Roadmap

The days of simply slapping an API wrapper on a language model and calling it an enterprise AI product are over. The future of B2B SaaS belongs to the companies that have the technical maturity to own and manage their intelligent systems. Moving to open-source middleware is no longer just a fun experiment for your developers; it is necessary if you want to build an application that can scale without bankrupting your business. The open-source community is moving incredibly fast, and these tools are likely to keep getting easier to use, smarter, and more reliable. Companies that adopt free AI orchestration now are protecting themselves against vendor lock-in and unpredictable price hikes from major tech giants. By rolling up your sleeves, choosing the right tools, and committing to building a proper in-house foundation, your SaaS company can evolve. You can transform from just another customer renting space on someone else's AI network into the true architect of your own technology, ready to offer your customers the smartest, fastest, and most secure experience possible.

Frequently Asked Questions

What is open-source AI orchestration?

Think of open-source AI orchestration as the smart middleman, or the head chef, in your software application. Instead of passing a user's question blindly to an AI model, it works through a checklist of tasks first. It takes the question, searches your company's private database for the right background information, packages that information with strict instructions, and then sends it to the AI. When the AI answers, this middleman checks the work, formats it into a table or chart, and presents it to the user. It keeps everything organized, secure, and running smoothly behind the scenes.

Why not just integrate directly with an AI provider's API?

Direct API integrations are great when you are building a quick prototype, but they become a nightmare as your company grows. Relying solely on direct connections makes your app dependent on one external vendor. If they raise their prices or their servers go down, you are out of luck, and rewriting your code to use a new vendor can take months. Open-source AI orchestration tools solve this by decoupling your software from the AI model. They let your engineers swap AI models as easily as changing a lightbulb, route traffic intelligently, and protect your software from breaking as the technology changes.
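In practice, decoupling usually means the rest of the application calls one function that hides which provider answers. This is a minimal sketch that assumes each provider client is wrapped as a plain function raising `ProviderError` on failure. The names are hypothetical, and both providers below are stubs.

```python
from typing import Callable, List, Sequence, Tuple


class ProviderError(Exception):
    """Raised by a provider wrapper when a call fails (outage, timeout, rate limit)."""


Provider = Tuple[str, Callable[[str], str]]  # (name, completion function)


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in order; return (provider name, answer) from the first success."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))


def external_api(prompt: str) -> str:
    raise ProviderError("503 Service Unavailable")  # simulate a vendor outage


def local_model(prompt: str) -> str:
    return f"[local] {prompt[:20]}"  # stub for a self-hosted model


print(complete_with_fallback("Summarize Q3 churn", [("external", external_api), ("local", local_model)]))
# ('local', '[local] Summarize Q3 churn')
```

With this structure, swapping models becomes a change to the provider list rather than a rewrite. An outage at one vendor then degrades service instead of taking the whole application down.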

Can free AI orchestration actually reduce our AI costs?

Absolutely. Relying purely on external AI vendors means paying for every token processed, which gets expensive as more people use your software. Free AI orchestration lets you act as a traffic controller. Your system can look at a simple user request, like formatting a date or summarizing a short paragraph, and send it to a small open-weight model hosted on your own servers, which carries no per-token fees. You reserve the expensive, paid AI calls for genuinely difficult reasoning tasks, which can dramatically lower your monthly operating costs.

How does self-hosted AI orchestration help with security and compliance?

When you sell software to hospitals, banks, or large enterprises, they have strict requirements (such as SOC 2 or HIPAA) about where their data can go. Sending their private data over the internet to a large external AI company is often an immediate dealbreaker. Self-hosted AI orchestration lets you keep the AI model, the orchestration framework, and the databases entirely inside your own secure, private network. Because the data never leaves your servers to get an answer, you can give clients strong privacy assurances and make those tough security audits much easier to pass.

What is the difference between LangChain and LlamaIndex?

While both are excellent tools, they are built for different primary jobs. LangChain is best described as an action-oriented framework. It is well suited to building AI agents that use external tools, make decisions, and execute multi-step tasks, like checking an inventory database and then sending an email. LlamaIndex, on the other hand, is a data librarian. It is specifically designed to ingest, organize, and search large amounts of messy company documents (like PDFs or Slack chats) so the AI gives accurate answers based on your private data.

Do we need a dedicated AI research team to adopt these tools?

Although it adds a new layer of work for your engineers, modern open-source AI orchestration frameworks are designed to be accessible. You do not need a team of PhD AI researchers to get started. The open-source community provides step-by-step guides, prebuilt templates, and easy-to-use tools that lower the barrier to entry significantly. A capable engineering team can start small by moving just one feature to an open-source pipeline, learning the ropes, and scaling up gradually without overwhelming its daily workflow.

Open-Source AI Orchestration Tools: Complete Guide

Table of Contents

The phase of plugging a single AI API into your product doesn't last long. As usage grows, costs spike, latency becomes unpredictable, and security teams start asking hard questions about where sensitive data is flowing. That's where opensource AI orchestration changes the equation. Instead of wiring your application directly to external models, an orchestration layer introduces structure—routing requests intelligently, grounding responses in private data, managing memory, enforcing guardrails, and allowing you to swap models without rewriting your entire stack. This guide explores the leading opensource AI orchestration tools from LangChain and LlamaIndex to Haystack and Semantic Kernel and explains how to choose the right framework based on your architecture and goals. Whether you're pursuing selfhosted AI orchestration for stronger data control or looking to reduce API spend through smarter routing, adopting opensource AI orchestration infrastructure gives your team flexibility, cost control, and long term ownership of your AI roadmap.

Think back to the early days of the generative AI boom. Adding AI to a product felt almost like magic, and the process was deceptively simple. Your engineering team would grab an API key from a major provider like OpenAI or Anthropic, write a few quick lines of code to pass a user's prompt over to their servers, and wait for the intelligent text to flow back into your application. It was an incredible party trick that looked great in a product demo. But as these simple prototypes grew up into real, production ready enterprise tools, a harsh reality set in. Real B2B applications need more than just a clever chatbot. They need to ground the AI's answers in your company's private data, a process known as retrieval augmented generation (RAG). They need to remember past conversations, chain multiple complex prompts together, and format the AI's output perfectly so your software can read it. Suddenly, that clean, simple codebase turned into a tangled mess of custom scripts and fragile integrations that broke every time a user asked a weird question. This is the exact moment when the critical need for open source AI orchestration becomes obvious. By putting a standardized management layer between your software and the AI models, your team can finally take back control. Software companies are quickly moving toward these opensource solutions to escape the high costs and strict limitations of proprietary vendors. Instead of renting someone else's infrastructure, they are building custom, flexible systems that can grow with their customer base. What is more, looking into free AI orchestration is not just about pinching pennies; it is about protecting your intellectual property and keeping your clients' sensitive data safe on your own servers. In this guide, we are going to walk through the real world story of a SaaS company upgrading its architecture, look at the best frameworks available today, and map out exactly how you can implement these tools in your own business.

The Breaking Point: Why Simple API Calls Fail in B2B

To really grasp why these frameworks are life savers, let us look at the story of a fictional, but very realistic, B2B SaaS company called OmniMetrics. OmniMetrics builds analytics software for human resources departments. A year ago, their leadership decided they needed an AI assistant to help users search through complex employee datasets. Their engineers took the easy route first, plugging in a direct API to a massive, external language model. At first, launch was a huge success. But as thousands of users started asking the AI to summarize massive quarterly reports, things started to crack. The monthly bill from their AI provider skyrocketed because they were paying for every single word generated. When the external AI provider had a server outage, the OmniMetrics application went down with it, angering customers. The biggest blow came during a sales call with a massive enterprise prospect. The prospect's security officer asked a simple question: "When our HR managers ask your AI about employee salaries, does that data leave your servers?" When OmniMetrics admitted the data was sent to a third-party AI vendor, the deal died instantly. That was their turning point. OmniMetrics realized that AI features could not just be an external addon; they had to be built into the core of their own software. They needed a shift toward selfhosted AI orchestration. By bringing the "brain" of the operation inside their own walls, they could route highly sensitive HR questions to small, private AI models running securely on their own servers. They could save the expensive, external AI models only for questions that did not involve private data. The market is full of opensource AI orchestration tools that make this kind of smart traffic routing possible. For OmniMetrics, this wasn't just a tech upgrade; it was the only way to save their profit margins, pass strict security audits, and keep growing their business.

What Does the Orchestration Layer Actually Do?

Before we dive into the specific brands and tools, let's talk in plain English about what an orchestration layer actually does inside a B2B SaaS application. Think of an AI model like a brilliant but isolated chef in a kitchen. The chef can cook amazing meals, but they don't know what the customer ordered, they don't know where the ingredients are kept, and they don't know how to plate the food for the dining room. An orchestration layer is the head chef or the expediter. It sits right in the middle of your application, your databases, and the AI model itself. [4] When a user types a question into your software, that question rarely goes straight to the AI anymore. Instead, the orchestration layer intercepts it. It acts like a smart librarian, taking the user's question and searching your company's private databases to find the right background information. It gathers those "ingredients" and hands them to the AI model along with clear instructions on how to answer. But the job doesn't stop there. When the AI hands an answer back, the orchestration layer checks it. It makes sure the AI didn't hallucinate or make things up. It formats the text into a clean data structure so your software can display it nicely in a chart or a table. Advanced AI orchestration opensource frameworks even handle the AI's memory, ensuring it remembers what the user said five minutes ago. By using these tools, your engineers can build a clean, organized kitchen. And the best part? If a brand new, cheaper AI model comes out next month, you don't have to rebuild your whole application. You just swap out the "chef" while the rest of your kitchen keeps running smoothly.

Build Your Open-Source AI Infrastructure

Hundred Solutions helps SaaS companies architect and implement open-source AI orchestration systems that reduce costs and maintain data sovereignty.

Get Your Architecture Consultation →LangChain: The Swiss Army Knife for Complex Tasks

When developers start talking about this shift in how we build AI, LangChain is almost always the first name that comes up. Born straight out of the developer community, LangChain has quickly become the gold standard for teams that want to build applications that do more than just talk. The creators of LangChain figured out very quickly that large language models are much more useful when they can act as reasoning engines—basically, a brain that can use tools. [2] In the world of B2B software, you don't just want an AI that can write a poem; you want an AI that can read a customer's email, check their account status in Salesforce, update a ticket in Jira, and then draft a response. LangChain makes this possible using two main concepts: chains and agents. A chain is exactly what it sounds like—a predictable, step-by-step process. This is perfect for strict corporate environments where a process, like generating an end of month financial report, needs to happen the same way every time. Agents are a bit more flexible. You give an agent a goal and a toolbox, and it uses the AI's brain to figure out the steps it needs to take to get the job done. For a SaaS company, bringing LangChain into your software means you can build incredibly smart workflows that actually mimic how a human employee thinks and works. Because it is so popular, choosing this framework means your team will have access to massive amounts of tutorials and community support, cementing its place as a heavyweight in the world of open source AI orchestration. [1]

LlamaIndex: The Ultimate Librarian for Your Company Data

While LangChain is amazing at taking action and using tools, LlamaIndex tells a slightly different story. For the vast majority of B2B SaaS companies, the biggest selling point of an AI feature isn't getting it to use external tools; it is getting it to talk intelligently about the company's massive piles of messy, internal data. This specific challenge is called Retrieval-Augmented Generation (RAG), and LlamaIndex was built from the ground up to be the absolute best tool in the world for this exact job. Think about how complicated a company's data is. It lives in PDF contracts, messy Slack conversations, structured customer databases, and scattered Google Docs. LlamaIndex acts as the ultimate corporate librarian. It comes with dozens of prebuilt connectors that easily suck up all those different types of files and formats. Once it pulls the data in, it neatly organizes, indexes, and stores it so that an AI model can search through it in milliseconds. It focuses heavily on making sure the search process is highly accurate. By feeding the AI exactly the right paragraphs from your company's handbooks or customer histories, LlamaIndex drastically reduces the chances of the AI making a mistake or hallucinating a false answer. Among the many opensource AI orchestration tools out there, LlamaIndex stands out because it allows developers to build search systems that understand context. For a SaaS business whose entire value relies on managing client data safely and accurately, LlamaIndex provides the rock-solid foundation needed to turn boring, static files into a dynamic, conversational experience for the user.

Haystack by deepset: The Precision Builder for Search

Haystack, built by the team at deepset, is a tool for serious engineers who care deeply about reliability. Some frameworks try to do everything, chasing every new AI trend that pops up on Twitter. Haystack, by contrast, stayed close to its roots: natural language processing and accurate search. That focus makes it a strong choice for B2B SaaS companies where transparency, speed, and stability matter more than flashy, unpredictable AI magic. Haystack treats building an AI pipeline like snapping together high-quality Lego blocks. Every piece of your system, from the component that stores documents to the one that retrieves them and the one that generates the final answer, is a separate, interchangeable module. This setup appeals to senior software architects because the team can test and tune each step of the process independently. If one part of your search isn't returning good results, you can swap that block out without breaking the rest of your application. Haystack has also long supported running smaller, specialized models on your own hardware, which makes it a natural fit for a self-hosted AI orchestration strategy. By keeping things clear, modular, and transparent, Haystack lets SaaS companies build AI features they can trust, showing that you can adopt free AI orchestration without sacrificing the stability your enterprise customers demand. [3]

Semantic Kernel: Microsoft's Bridge for Enterprise Teams

When a major player like Microsoft enters the open-source world, people pay attention, and its Semantic Kernel framework is a significant milestone for the industry. Semantic Kernel is about building a sturdy bridge between cutting-edge AI research and the traditional, secure coding environments that large companies have relied on for decades. Many large B2B SaaS platforms are not built on lightweight, experimental code; they rely on enterprise languages like C# and Java. Semantic Kernel was designed to work smoothly in those languages (it also ships a Python SDK), bringing modern AI capabilities to large, legacy software systems. Semantic Kernel uses concepts called plugins and planners. Think of plugins as standard operating procedures: they let your developers wrap existing code into packages whose functions the AI can discover and call. The planner is the brain of the operation. When a user asks a complex question, the planner examines the request and generates a step-by-step plan that uses those plugins to get the job done. In recent releases, Microsoft has shifted much of this planning role to the model's built-in function calling, so check the current documentation before designing around a specific planner. This setup is attractive for large SaaS companies that already host their software on Microsoft Azure or employ large teams of C# developers. It gives them a familiar, security-conscious path into open-source AI orchestration frameworks, and it shows that you can bring generative AI into a large, complex corporate codebase without tearing it down and starting over from scratch. [5]
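The plugin-and-planner pattern can be sketched in plain Python: existing functions are registered with plain-language descriptions, a planner picks which ones to call, and an executor runs them in order. This is not Semantic Kernel's API (its SDKs have their own registration mechanisms). The `plugin` decorator, `plan`, `execute`, and the keyword-matching planner are hypothetical stand-ins for a model-driven planner or function calling.

```python
from typing import Callable, Dict, List, Set, Tuple

# Registry of "plugins": existing business functions plus a plain-language
# description that a planner can use to decide what to call.
PLUGINS: Dict[str, Tuple[str, Callable[[str], str]]] = {}
STOPWORDS = {"a", "an", "the", "and", "it", "to", "by", "for"}

def plugin(description: str):
    def register(fn: Callable[[str], str]) -> Callable[[str], str]:
        PLUGINS[fn.__name__] = (description, fn)
        return fn
    return register

@plugin("look up an invoice by account id")
def get_invoice(account: str) -> str:
    return f"invoice for {account}: $120"  # stands in for legacy billing code

@plugin("send an email summary")
def send_email(body: str) -> str:
    return f"email sent: {body}"

def keywords(text: str) -> Set[str]:
    return set(text.lower().split()) - STOPWORDS

def plan(request: str) -> List[str]:
    # Toy planner: keyword overlap with plugin descriptions, kept in
    # registration order. A real planner or function-calling model reasons
    # over the descriptions and decides the order itself.
    words = keywords(request)
    return [name for name, (desc, _) in PLUGINS.items() if words & keywords(desc)]

def execute(steps: List[str], arg: str) -> List[str]:
    results, current = [], arg
    for name in steps:
        current = PLUGINS[name][1](current)  # each step's output feeds the next
        results.append(current)
    return results

steps = plan("find the invoice and email it to acme")
print(steps)
print(execute(steps, "acme"))
```

Wrapping legacy code this way leaves the original functions unchanged; only the description and registration are new, which is the main appeal for large existing codebases.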

How to Actually Implement This: A Step-by-Step Guide for SaaS Teams

Moving from reading about these frameworks to building them into your software requires a deliberate, step-by-step approach. You can't rip out your old code over a weekend. The journey starts with your technical leaders defining exactly what problem you are trying to solve. Are you building an AI that performs complex tasks and uses external tools? If so, lean toward an agent-focused framework like LangChain. Are you helping users search through thousands of private documents? Then a data-focused framework like LlamaIndex is the better fit. Once you have picked a tool, the next step is preparing your infrastructure. This usually means setting up a container orchestration platform such as Kubernetes to run the databases and AI models you will host yourself. Your engineering team also needs safe deployment pipelines, with automated tests for prompts and model settings, so that updating the AI's instructions doesn't accidentally break the product for all your customers. Finally, monitor everything. Because model output can be unpredictable, it is crucial to trace every step the AI takes: how long each answer takes to generate, how much each request costs, and whether the answers are actually accurate. With this careful, engineering-first approach, a SaaS company can move away from messy, experimental code and build a stable, scalable foundation for the future.
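Monitoring can start as simply as wrapping each model call. This Python sketch records latency, a rough token count, and an estimated cost per call. The model names, per-token prices, and whitespace-based token estimate are hypothetical placeholders; in production you would use the provider's reported token counts and export these records to your observability stack rather than keep them in memory.

```python
import time
from dataclasses import dataclass, field
from functools import wraps
from typing import Callable, List

# Hypothetical prices per 1,000 tokens; substitute your real rates.
PRICE_PER_1K_TOKENS = {"small-local": 0.0, "large-api": 0.01}

@dataclass
class CallRecord:
    step: str
    model: str
    latency_s: float
    tokens: int
    cost_usd: float

@dataclass
class Tracer:
    records: List[CallRecord] = field(default_factory=list)

    def trace(self, step: str, model: str):
        def decorator(fn: Callable[[str], str]) -> Callable[[str], str]:
            @wraps(fn)
            def wrapper(prompt: str) -> str:
                start = time.perf_counter()
                output = fn(prompt)
                latency = time.perf_counter() - start
                # Crude estimate: whitespace-separated words, not real tokens.
                tokens = len(prompt.split()) + len(output.split())
                cost = tokens / 1000 * PRICE_PER_1K_TOKENS[model]
                self.records.append(CallRecord(step, model, latency, tokens, cost))
                return output
            return wrapper
        return decorator

    def total_cost(self) -> float:
        return sum(r.cost_usd for r in self.records)

tracer = Tracer()

@tracer.trace(step="summarize", model="large-api")
def summarize(prompt: str) -> str:
    return "short summary"  # stand-in for a real model call

summarize("please summarize this quarterly report for the board")
for r in tracer.records:
    print(r.step, r.model, f"{r.latency_s:.4f}s", r.tokens, f"${r.cost_usd:.5f}")
```

Tagging each record with a step name is what lets you see which stage of a multi-step pipeline is slow or expensive, rather than only a per-request total.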

The Business Case: Saving Money and Winning Trust

In the end, the decision to overhaul your technology isn't just about new code; it is a business decision driven by two factors: protecting your profit margins and winning the trust of your enterprise customers. If you rely entirely on proprietary, black-box AI vendors, your costs become hard to predict. Every time users engage with your AI features, your company is charged for the tokens processed. If a feature becomes popular, your API bills grow with it and eat into your margins. With open-source AI orchestration tools, you can implement a strategy called semantic routing. The system examines each prompt, judges how demanding it is (for example, by comparing it with examples of simple and complex requests), and routes it accordingly. Simple questions go to small, efficient models that you host yourself at a much lower marginal cost; only the genuinely complex questions are sent to expensive, paid APIs. This cost strategy goes hand in hand with data security. In the B2B world, keeping your clients' data safe is everything. Large enterprise customers often will not sign a contract if their private financial or employee data would be sent over the internet to a third-party AI company. Building around self-hosted AI orchestration addresses this directly, because your company keeps control over the entire data lifecycle. Sensitive information stays in your own databases, and the models process requests inside your own isolated servers. Having this architecture in place makes rigorous security audits easier to pass, helps your sales team close larger deals faster, and shows the market that your software is not just smart, but safe.
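Here is a minimal sketch of semantic routing in Python: each route is described by a few example prompts, and an incoming prompt goes to the route whose examples it most resembles. The route names, example utterances, and the bag-of-words similarity (a stand-in for a real embedding model) are all hypothetical, and a production router would also need a fallback for low-confidence matches.

```python
import math
import re
from collections import Counter
from typing import Dict, List

def vec(text: str) -> Counter:
    # Toy bag-of-words vector; a real router would use an embedding model.
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)  # Counter returns 0 for missing terms
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0

# Hypothetical example utterances describing each route.
ROUTES: Dict[str, List[str]] = {
    "small-local": ["format this date", "fix the spelling",
                    "summarize this short paragraph"],
    "large-api": ["analyze this contract and compare the liability clauses",
                  "plan a multi step migration and explain the risks"],
}

def route(prompt: str) -> str:
    p = vec(prompt)
    # Score each route by its best-matching example, then pick the top route.
    scores = {name: max(cosine(p, vec(ex)) for ex in examples)
              for name, examples in ROUTES.items()}
    return max(scores, key=scores.get)

for q in ["please format this date: 2024-01-05",
          "compare the liability clauses in these two contracts"]:
    print(q, "->", route(q))
```

The routing decision is cheap because it runs before any model is called; the savings come from how much traffic lands on the self-hosted route.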

Conclusion: Taking Back Control of Your AI Roadmap

The days of simply wrapping a language model API and calling it an enterprise AI product are over. The future of B2B SaaS belongs to companies with the technical maturity to own and manage their intelligent systems. Moving to open-source middleware is no longer just a side experiment for your developers; it is necessary if you want an application that can scale without runaway costs. The open-source community is moving fast, and these tools keep getting easier to use and more reliable. Companies that adopt free AI orchestration now reduce their exposure to vendor lock-in and unpredictable price hikes from major tech providers. By rolling up your sleeves, choosing the right tools, and committing to a proper in-house foundation, your SaaS company can evolve from just another customer renting capacity on someone else's AI platform into the architect of its own technology, ready to offer customers the smartest, fastest, and most secure experience possible.

Frequently Asked Questions

What is open source AI orchestration?

Think of open source AI orchestration as the smart middleman, or the head chef, in your software application. Instead of passing a user's question blindly to an AI model, it works through a checklist of tasks first. It takes the question, searches your company's private data for the right background information, packages that information with strict instructions, and then sends it to the model. When the model answers, the orchestration layer checks the output, formats it (for example, into a table or chart), and presents it to the user. It keeps everything organized, secure, and running smoothly behind the scenes.
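The checklist above maps onto a small control loop. This Python sketch retrieves context, builds a prompt with strict formatting instructions, calls a model, and validates that the reply is well-formed JSON with the expected keys before passing it on, retrying once if it is not. `orchestrate`, `fake_search`, and `flaky_model` are hypothetical stand-ins; real frameworks supply their own retrievers and output parsers.

```python
import json
from typing import Callable, Dict, List

def orchestrate(
    question: str,
    search: Callable[[str], List[str]],
    call_model: Callable[[str], str],
    max_attempts: int = 2,
) -> Dict:
    context = search(question)  # 1. retrieve background information
    prompt = (                  # 2. package context with strict instructions
        "Answer as JSON with keys 'answer' and 'sources'.\n"
        f"Context: {context}\nQuestion: {question}"
    )
    for _ in range(max_attempts):
        raw = call_model(prompt)  # 3. call the model
        try:
            data = json.loads(raw)  # 4. check the work
        except json.JSONDecodeError:
            continue  # malformed output: try again
        if isinstance(data, dict) and all(k in data for k in ("answer", "sources")):
            return data  # 5. hand structured data to the UI for formatting
    return {"answer": "Sorry, I couldn't produce a reliable answer.", "sources": []}

# Hypothetical stand-ins so the sketch runs without a model or database.
def fake_search(q: str) -> List[str]:
    return ["Plan A costs $10/month."]

responses = iter(["not json", '{"answer": "$10/month", "sources": ["pricing.md"]}'])

def flaky_model(prompt: str) -> str:
    return next(responses)

print(orchestrate("How much is Plan A?", fake_search, flaky_model))
```

Returning a safe fallback instead of raw model text is what keeps the user interface from breaking when the model misbehaves.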

Why not just connect directly to an AI provider's API?

Direct API integrations are great when you are building a quick prototype, but they become a nightmare as your company grows. Relying solely on direct connections makes your app dependent on one external vendor. If that vendor raises prices or its servers go down, you are out of luck, and rewriting your code to use a new vendor can take months. Open source AI orchestration tools solve this by decoupling your software from the AI model. They let your engineers swap AI models as easily as changing a lightbulb, route traffic intelligently, and keep your software from breaking as the technology changes.
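Decoupling usually comes down to coding against one small interface. In this Python sketch, application code calls only `complete_with_fallback`, and each vendor or self-hosted model sits behind an adapter exposing the same `complete` method, so swapping or reordering models becomes a configuration change. All class and function names are hypothetical, and the outage is simulated with a flag rather than a real network failure.

```python
from typing import List, Protocol

class ModelProvider(Protocol):
    name: str
    def complete(self, prompt: str) -> str: ...

class ProviderDown(Exception):
    pass

class HostedAPIProvider:
    # Hypothetical adapter; a real one would wrap a vendor SDK or HTTP API.
    name = "hosted-api"

    def __init__(self, available: bool = True):
        self.available = available

    def complete(self, prompt: str) -> str:
        if not self.available:
            raise ProviderDown(self.name)
        return f"[{self.name}] answer"

class LocalModelProvider:
    # Hypothetical adapter for a self-hosted model server.
    name = "local-model"

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] answer"

def complete_with_fallback(providers: List[ModelProvider], prompt: str) -> str:
    # Application code depends only on this function, never on a vendor SDK.
    errors = []
    for provider in providers:
        try:
            return provider.complete(prompt)
        except ProviderDown as exc:
            errors.append(str(exc))
    raise RuntimeError(f"all providers failed: {errors}")

print(complete_with_fallback([HostedAPIProvider(available=False), LocalModelProvider()], "hi"))
```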

Can open source AI orchestration lower my AI costs?

Absolutely. Relying purely on external AI vendors means paying for every token processed and generated, which gets expensive as more people use your software. Free, open-source orchestration lets your system act as a traffic controller. It can take a simple user request (like formatting a date or summarizing a short paragraph) and send it to a small open model hosted on your own servers, where you pay for the compute rather than a per-call fee. You reserve the expensive, paid API calls for genuinely difficult reasoning tasks, which can substantially lower your monthly operating costs.

How does self-hosted AI orchestration help with security and compliance?

When you sell software to hospitals, banks, or large enterprises, they have strict requirements about where their data can go, shaped by regulations such as HIPAA and audit frameworks such as SOC 2. Sending their private data over the internet to an external AI company is often an immediate dealbreaker. Self-hosted AI orchestration lets you keep the AI model, the orchestration framework, and the databases entirely inside your own secure, private network. Because the data never leaves your servers to get an answer, you can give clients much stronger privacy assurances and make those security audits easier to pass.
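One practical way to enforce "the data never leaves your servers" is a guard that every outbound model or database call must pass. This Python sketch allows only allowlisted internal hostnames or private IP addresses. The hostnames and `send_to_model` are hypothetical, and because the check does not resolve DNS, a real deployment would pair it with network-level controls such as egress firewall rules.

```python
import ipaddress
from urllib.parse import urlparse

# Hypothetical allowlist of internal hostnames for self-hosted services.
INTERNAL_HOSTS = {"llm.internal.example.com", "vector-db.internal.example.com"}

class DataResidencyError(Exception):
    pass

def assert_internal_endpoint(url: str) -> None:
    """Refuse to send data anywhere outside the private network."""
    host = urlparse(url).hostname
    if host is None:
        raise DataResidencyError(f"no host in {url!r}")
    if host in INTERNAL_HOSTS:
        return
    try:
        if ipaddress.ip_address(host).is_private:
            return
    except ValueError:
        pass  # not an IP literal and not on the allowlist
    raise DataResidencyError(f"blocked external endpoint: {host}")

def send_to_model(url: str, payload: dict) -> str:
    assert_internal_endpoint(url)  # check before any customer data leaves
    return f"would POST {len(payload)} field(s) to {url}"  # placeholder for a real HTTP call

print(send_to_model("http://10.0.0.5:8080/v1", {"question": "..."}))
```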

What is the difference between LangChain and LlamaIndex?

Both are excellent tools, but they are built for different primary jobs. LangChain is best described as an action-oriented framework: it is well suited to building AI agents that use external tools, make decisions, and execute multi-step tasks, like checking an inventory database and then sending an email. LlamaIndex, on the other hand, is a data librarian. It is designed to ingest, organize, and search large volumes of messy company documents (like PDFs or Slack chats) so the AI gives accurate answers grounded in your private data.

Is open source AI orchestration difficult to implement?

It adds a new layer of work for your engineers, but modern open-source AI orchestration frameworks are designed to be accessible. You do not need a team of PhD AI researchers to get started. The open-source community provides step-by-step guides, prebuilt templates, and developer-friendly tools that significantly lower the barrier to entry. A capable engineering team can start small by moving a single feature to an open-source pipeline, learning the ropes, and scaling up gradually without overwhelming its daily workflow.
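Starting small often means routing a fraction of traffic for a single feature to the new pipeline. This Python sketch uses a stable hash so each user consistently gets the same path while the percentage is raised over time. The feature name, the 10% figure, and both pipeline labels are hypothetical.

```python
import hashlib

def in_rollout(user_id: str, feature: str, percent: int) -> bool:
    """Deterministically place a user in the first `percent`% of 100 buckets."""
    digest = hashlib.sha256(f"{feature}:{user_id}".encode()).hexdigest()
    bucket = int(digest[:8], 16) % 100  # stable bucket in 0..99 per user and feature
    return bucket < percent

def answer(user_id: str, question: str) -> str:
    # Hypothetical: only the "doc_search" feature is being migrated, at 10%.
    if in_rollout(user_id, "doc_search", percent=10):
        return f"[new open-source pipeline] {question}"
    return f"[existing integration] {question}"

print(answer("user-42", "Where is my contract?"))
```

Because a user's bucket never changes, raising the percentage from 10 to 50 keeps everyone already on the new pipeline there, which makes before-and-after comparisons cleaner.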
